IECE Journal of Image Analysis and Processing

ISSN: 3067-3682 (Online)


Synthetic Aperture Radar (SAR) is a remote sensor capable of capturing high-resolution images in all weather conditions, independent of external light sources like the sun [1]. Automatic Target Recognition (ATR) for SAR has been widely researched, aiming to classify targets from SAR images into predefined classes using decision engines [2]. This paper focuses on enhancing target identification using an ensemble classification approach.

The typical SAR ATR process consists of three stages: target detection, discrimination, and recognition [3]. Detection involves segmenting the target area from the image to remove background noise, while discrimination extracts key features to represent the target. During recognition, these features are classified, and the results from multiple classifiers are combined using an ensemble method to determine the final target class [4].

There are three primary SAR ATR approaches: template matching, machine learning, and model-based techniques [5]. In this work, we combine template matching with two machine learning methods—Support Vector Machine (SVM) and Convolutional Neural Network (CNN)—using majority voting to improve classification performance.

SVM, a supervised learning model, excels in classification by finding the optimal hyperplane that separates target classes [6]. CNNs, known for their strong image classification capabilities, extract general and specific features through different layers of the network. However, CNNs are more effective under standard operating conditions (SOC) and struggle with extended operating conditions (EOC) beyond the training data [3]. Template matching compares target features with stored templates but requires high computational resources and manual feature engineering [5].

Although this paper does not use a model-based approach, such methods typically involve real-time feature construction from models representing target shapes, which is suitable only for specific cases.

By using majority voting to combine CNN, SVM, and template matching, we demonstrate that an ensemble approach can further improve classification accuracy [7]. The Moving and Stationary Target Acquisition and Recognition (MSTAR) dataset, which includes ten classes of ground targets, is used to validate this method.

The main contributions of this paper are as follows:

This paper introduces an innovative ensemble method for SAR Automatic Target Recognition (ATR) by integrating AlexNet, SVM, and template matching, which collectively improves classification accuracy under varying operational conditions (SOC and EOC).

The study proposes a robust preprocessing method that includes threshold segmentation with histogram equalization and morphological filtering, ensuring accurate region-of-interest extraction in SAR images.

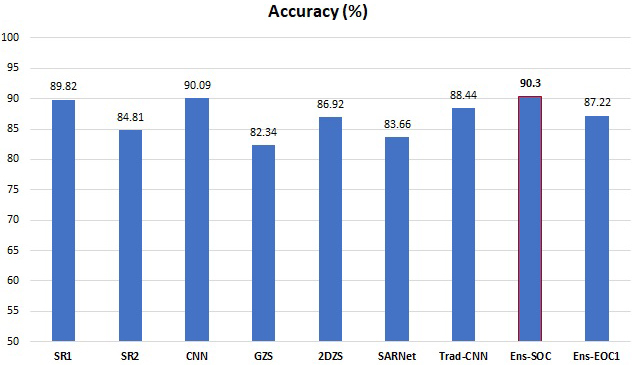

By combining three distinct classification techniques with majority voting, the proposed ensemble approach achieves higher accuracy than individual classifiers, with average accuracies of 90.30% in SOC and 87.22% in EOC-1.

The paper validates the ensemble model on the widely-used MSTAR dataset, demonstrating its effectiveness across multiple target types and conditions, thereby providing a valuable benchmark for SAR ATR research.

Detailed experimental analysis highlights the strengths and limitations of each classifier (AlexNet, SVM, and template matching) in different scenarios, offering insights into their behavior under standard and extended operating conditions.

This study supports the advancement of SAR ATR technology by demonstrating the effectiveness of ensemble and fusion methods, encouraging further exploration into ensemble-based approaches for robust SAR target recognition.

The remainder of this paper is organized as follows. Section 2 reviews related work. Section 3 presents the proposed methodology, including target region extraction and recognition. Section 4 discusses the evaluation metric, and Section 5 details the experiments on the MSTAR dataset. Section 6 provides the conclusion.

Various machine learning techniques have been employed to address SAR ATR challenges. For instance, reference [8] utilized Support Vector Machine (SVM) for classification along with a radial method for feature extraction. However, these approaches require manually designed features and are limited by subjective biases and generalization capabilities. Recently, several CNN variations have been adapted for SAR ATR. Notably, reference [9] introduced SARNet, a lightweight CNN-based classification model, while reference [10] proposed an unsupervised deep learning model based on encoding–decoding architecture. Additionally, reference [11] developed a CNN-based SAR ATR method using attributed scattering centers (ASC) for target reconstruction, and reference [5] introduced a super-resolution generative adversarial network (SRGAN) combined with deep convolutional neural networks (DCNN) for SAR ATR.

Challenges remain, such as insufficient labeled training data for CNNs in SAR classification tasks [10, 13, 14] and the impact of speckle noise on extracted features [12]. Increasing the number of layers in CNNs can also lead to overfitting, making it difficult to converge to optimal solutions [5]. Template matching, another SAR ATR technique, involves comparing extracted features with various template classes. For example, reference [2] applied the Euclidean distance transform on binary target regions to enhance matching accuracy, while reference [15] reconstructed targets based on ASCs. However, noise and occlusions can lead to missing or incorrect scattering centers, complicating the matching process [17, 18, 19, 20, 25].

| Features | Description |
|---|---|
| Dataset Name | MSTAR |
| Availability | Freely obtainable from the U.S. Air Force's Sensor and Data Management System (SDMS) |
| Research Importance | Widely used in literature for assessing techniques against previously published methods [1] |
| Vehicle Classes | D7, BRDM2, T62, 2S1, BTR70, T72, ZSU23-4, ZIL131, BTR60, BMP2 |
| Aspect Coverage | 0° to 360° |
| Depression Angles Available | 15°, 17°, 30°, 45° [10] |
| Dataset Division | Split into training and testing sets based on the radar's depression angle |
| Training Set Depression Angle | 17° |
| Testing Set Depression Angles | 15° and 30° [6] |

Ensemble classifiers have been widely employed to enhance efficiency in Synthetic Aperture Radar (SAR) Automatic Target Recognition (ATR) by synergistically combining multiple complementary approaches. Early works, such as references [21, 24], introduced hierarchical fusion techniques that integrated handcrafted features like Principal Component Analysis (PCA), target outlines, and attributed scattering centers (ASCs). Similarly, reference [22] combined features derived from methods such as elliptical Fourier descriptors (EFDs) and local binary patterns (LBP). Recent advances have expanded these ensemble paradigms by incorporating deep learning frameworks. For instance, Zhang et al. [26] proposed a cascaded CNN architecture fused with AdaBoost and Rotation Forest, leveraging both deep-learned and ensemble strategies to address small sample recognition challenges. Likewise, Xue et al. [27] developed a heterogeneous CNN ensemble, combining diverse network architectures to improve robustness against speckle noise and geometric distortions.

Beyond ensemble methods, sparse representation techniques have gained traction for their ability to handle noise and occlusions. He et al. [31] introduced a multidimensional sparse model to enhance discrimination under varying imaging conditions, while Zhang et al. [32] and Gishkori et al. [37] explored sparse coding of Zernike and pseudo-Zernike moments, respectively, to balance invariance and discriminative power. These methods aim to mitigate limitations of traditional handcrafted features through adaptive sparse representations. Meanwhile, efforts to optimize handcrafted descriptors persist, such as Bolourchi et al. [36], who utilized Fisher scores to select moment-based features, and Gorovyi et al. [33], who streamlined feature extraction for real-time classification. Comparative studies, like Belloni et al. [35], further evaluate the efficacy of diverse feature sets in SAR ATR scenarios.

The shift toward data-driven approaches is evident in works like Coman [34], who demonstrated the superiority of CNNs over classical methods on the MSTAR dataset, and Zaied et al. [38], who combined convolutional networks with auto-encoders for robust feature learning. Despite these advancements, many existing techniques still rely on handcrafted features, which struggle with invariance under speckle variation and partial occlusions [6, 23]. While recent innovations in deep learning and sparse modeling—such as those in [26, 27, 31, 34, 37, 38]—offer promising alternatives, challenges remain in balancing computational efficiency, interpretability, and generalization across diverse SAR operating conditions. The field continues to evolve, with hybrid frameworks that merge learned and engineered features likely to dominate future research.

To improve SAR ATR performance for ground vehicle classification, this study employs an ensemble classifier that integrates SVM, CNN, and template matching. By leveraging the strengths of each method while addressing their limitations, this ensemble approach aims to enhance overall classification accuracy.

The SAR Automatic Target Recognition (ATR) problem spans varying degrees of complexity and is important to military capabilities. While functional SAR ATR systems are currently operational, the primary challenge lies in improving their effectiveness in more complex scenarios [29].

This study proposes a methodology to develop an improved classifier ensemble technique that combines CNN (AlexNet), SVM, and template matching. The approach consists of several key steps to enhance classification performance.

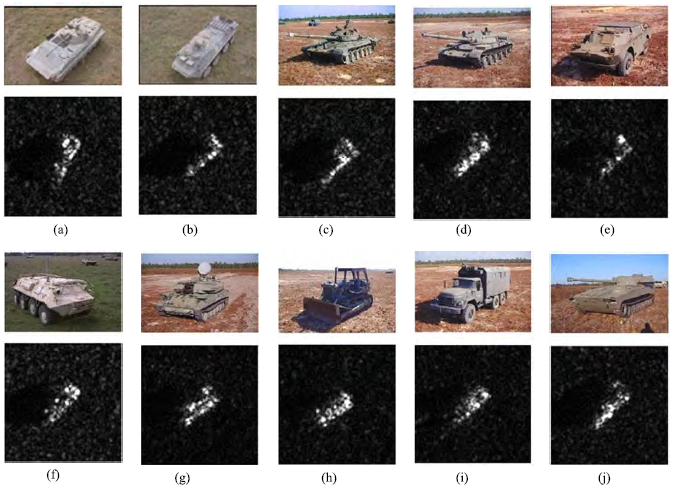

This study uses the MSTAR dataset. Table 1 presents its key aspects, usage, and specifications, while the optical and SAR images are presented in Figure 1.

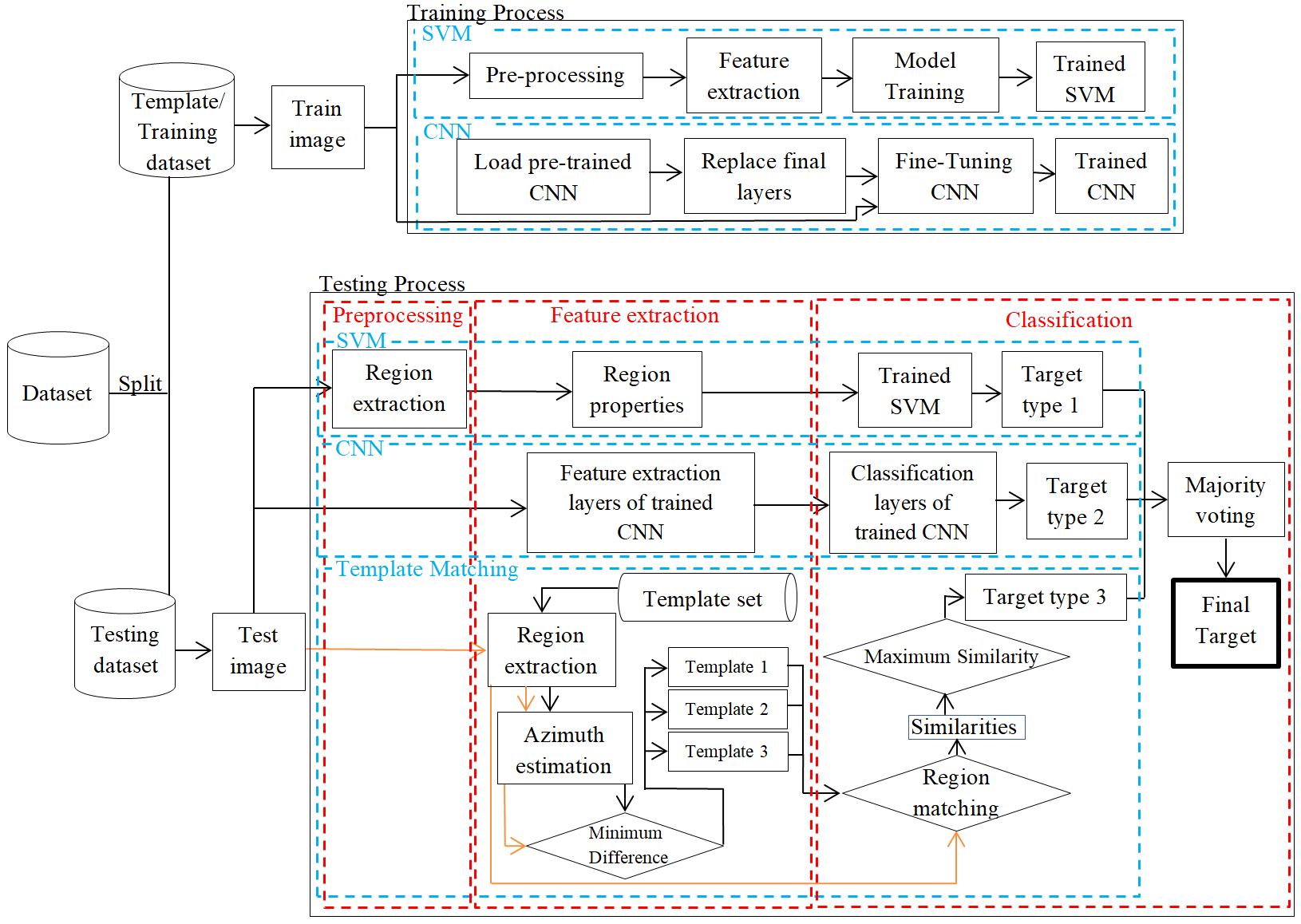

The block diagram in Figure 2 provides an overview of the methodology, highlighting the key stages involved. In the following subsections, we provide a detailed discussion of each stage, including the preprocessing steps, feature extraction process, and classification techniques.

In this work, different techniques are employed, each requiring distinct pre-processing steps. As such, the pre-processing procedures for each technique are discussed separately. Specifically, we will explore the pre-processing steps for SVM, CNN, and Template Matching individually.

Support Vector Machine. SAR images often contain noisy backgrounds that must be removed before further processing [7]. To reduce noise and ensure consistency across the MSTAR dataset, all image chips were resized to a standard 128×128 pixel resolution [6]. Various techniques were then applied to extract the binary target region, as discussed below.

Extraction of Binary Target Region. Before feature extraction, the binary target region is extracted using a target segmentation technique. The detailed segmentation process in this study consists of the following steps:

The intensities of the original image are normalized to the range [0, 1] using the standard histogram equalization technique [16].

A 5×5 averaging filter is applied to the equalized image.

The smoothed image is segmented using a normalized threshold of 0.8 [7].

False alarms caused by noise are removed using the morphological opening operation [16].

A binary morphological closing operation is performed to fill any holes and connect the target area [16].

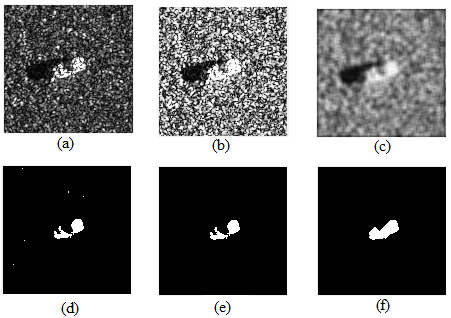

Figure 3 illustrates the process as follows:

Figure 3a is the original SAR image of a BTR70 vehicle from the MSTAR dataset.

Figure 3b shows the image after histogram equalization, which adjusts the intensities.

Figure 3c is the smoothed image after applying a 5×5 averaging filter.

Figure 3d is the preliminary segmentation, showing false alarms caused by noise.

Figure 3e shows the image after applying the morphological opening operation with a pixel threshold of 30, which effectively removes small noise areas.

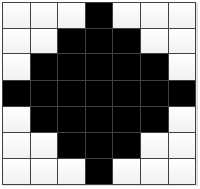

Figure 3f is the final binary target region, obtained after applying morphological closing with the 7×7 diamond structuring element shown in Figure 4.
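The segmentation steps illustrated in Figure 3 can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used in the study: the helper names (`equalize`, `box_filter`, `remove_small`, `segment_target`) are hypothetical, the "pixel threshold of 30" is read as removing connected components smaller than 30 pixels, and 8-connectivity and the border handling are assumed.

```python
from collections import deque

import numpy as np


def equalize(img, bins=256):
    """Histogram-equalize an image; output intensities lie in [0, 1]."""
    img = img.astype(float)
    scaled = (img - img.min()) / (img.max() - img.min() + 1e-12)
    hist, _ = np.histogram(scaled, bins=bins, range=(0.0, 1.0))
    cdf = np.cumsum(hist) / scaled.size
    idx = np.minimum((scaled * bins).astype(int), bins - 1)
    return cdf[idx]


def box_filter(img, k=5):
    """k x k averaging filter with edge replication at the borders."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)


def remove_small(mask, min_pixels=30):
    """Drop 8-connected components with fewer than min_pixels pixels."""
    h, w = mask.shape
    seen = np.zeros((h, w), dtype=bool)
    out = np.zeros((h, w), dtype=bool)
    for y, x in zip(*np.nonzero(mask)):
        if seen[y, x]:
            continue
        seen[y, x] = True
        queue, comp = deque([(y, x)]), [(y, x)]
        while queue:
            cy, cx = queue.popleft()
            for ny in range(max(cy - 1, 0), min(cy + 2, h)):
                for nx in range(max(cx - 1, 0), min(cx + 2, w)):
                    if mask[ny, nx] and not seen[ny, nx]:
                        seen[ny, nx] = True
                        queue.append((ny, nx))
                        comp.append((ny, nx))
        if len(comp) >= min_pixels:
            ys, xs = np.array(comp).T
            out[ys, xs] = True
    return out


def diamond(radius=3):
    """Offsets of a diamond structuring element; radius 3 spans 7 x 7."""
    rng = range(-radius, radius + 1)
    return [(dy, dx) for dy in rng for dx in rng if abs(dy) + abs(dx) <= radius]


def _shift_combine(mask, offsets, pad_value, combine, init):
    h, w = mask.shape
    r = max(max(abs(dy), abs(dx)) for dy, dx in offsets)
    padded = np.pad(mask, r, mode="constant", constant_values=pad_value)
    out = np.full((h, w), init, dtype=bool)
    for dy, dx in offsets:
        out = combine(out, padded[r + dy:r + dy + h, r + dx:r + dx + w])
    return out


def close(mask, offsets):
    """Morphological closing: dilation followed by erosion."""
    dilated = _shift_combine(mask, offsets, False, np.logical_or, False)
    return _shift_combine(dilated, offsets, True, np.logical_and, True)


def segment_target(chip):
    """Equalize, smooth (5x5), threshold at 0.8, clean up, and close."""
    smooth = box_filter(equalize(chip), k=5)
    mask = smooth > 0.8
    mask = remove_small(mask, min_pixels=30)
    return close(mask, diamond(3))
```

Calling `segment_target(chip)` on a 128×128 array returns a boolean mask of the same shape. Because equalization maps intensities to their cumulative distribution, the 0.8 threshold keeps roughly the brightest fifth of the smoothed image before the cleanup steps.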

In image processing, feature extraction is the process of obtaining relevant features from a large set of image data, while retaining as much informative content as possible. Local features refer to distinct structures or patterns found in an image, such as edges, small image patches, or key points [28]. The goal of feature extraction is to generate low-dimensional representations of the original SAR images, while preserving the discriminative information necessary to differentiate between various targets [3].

The feature extraction strategies for each method are outlined below.

Support Vector Machine. For effective target discrimination, the extracted features should exhibit physically diverse properties [30]. In this study, the MATLAB regionprops function was used to extract region properties from the binary target region. The properties utilized in this study are listed in Table 2, along with their descriptions.

| Property Name | Description |
|---|---|
| Area | Total number of pixels in the region. |
| MajorAxisLength | Length, in pixels, of the major axis of the ellipse fitted to the region; returned as a scalar. |
| MinorAxisLength | Length, in pixels, of the minor axis of the ellipse fitted to the region; returned as a scalar. |
| Circularity | Roundness of the object. |
| ConvexArea | Total number of pixels in "ConvexImage"; returned as a scalar. |
| Eccentricity | Ratio of the distance between the foci of the ellipse to the length of its major axis. An eccentricity of zero corresponds to a circle, and one to a line segment. |
| EquivDiameter | Diameter of a circle with the same area as the region, computed as sqrt(4*Area/pi); returned as a scalar. |
| Extent | Ratio of the pixels in the region to the pixels in the total bounding box, computed as the area divided by the bounding box area; returned as a scalar. |
| FilledArea | Total number of on pixels in the filled image; returned as a scalar. |
| MaxFeretDiameter | Maximum distance between any two boundary points on the antipodal vertices of the convex hull that surrounds the object. |
| MaxFeretAngle | Angle of the maximum Feret diameter relative to the horizontal axis of the image. |
| MinFeretDiameter | Minimum distance between any two boundary points on the antipodal vertices of the convex hull that surrounds the object. |
| MinFeretAngle | Angle of the minimum Feret diameter relative to the horizontal axis of the image. |
| Orientation | Angle between the x-axis and the major axis of the ellipse that has the same second moments as the region; returned as a scalar. |
| Perimeter | Distance around the boundary of the target; returned as a scalar. |
| Solidity | Proportion of the pixels in the convex hull that are also in the region, computed as Area/ConvexArea. |
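To make a few of the Table 2 definitions concrete, the sketch below computes Area, Extent, EquivDiameter, the ellipse axis lengths, and Eccentricity from a binary mask with NumPy. It is an illustration only: the function name `region_features` is hypothetical, the ellipse is fitted from normalized second central moments (with the customary 1/12 per-pixel correction), and the output is not claimed to match MATLAB's regionprops exactly.

```python
import numpy as np


def region_features(mask):
    """Compute a subset of region properties for one binary region."""
    ys, xs = np.nonzero(mask)
    area = int(ys.size)
    if area == 0:
        raise ValueError("mask contains no foreground pixels")

    # Bounding box size in pixels.
    bbox_h = int(ys.max() - ys.min() + 1)
    bbox_w = int(xs.max() - xs.min() + 1)

    # Normalized second central moments; 1/12 accounts for pixel extent.
    uxx = xs.var() + 1.0 / 12.0
    uyy = ys.var() + 1.0 / 12.0
    uxy = np.mean((xs - xs.mean()) * (ys - ys.mean()))
    common = np.sqrt((uxx - uyy) ** 2 + 4.0 * uxy ** 2)

    major = 2.0 * np.sqrt(2.0) * np.sqrt(uxx + uyy + common)
    minor = 2.0 * np.sqrt(2.0) * np.sqrt(uxx + uyy - common)
    eccentricity = 2.0 * np.sqrt((major / 2) ** 2 - (minor / 2) ** 2) / major

    return {
        "Area": area,
        "Extent": area / (bbox_h * bbox_w),
        "EquivDiameter": float(np.sqrt(4.0 * area / np.pi)),
        "MajorAxisLength": float(major),
        "MinorAxisLength": float(minor),
        "Eccentricity": float(eccentricity),
    }
```

For a filled axis-aligned rectangle, Extent is exactly 1 because the region fills its bounding box, which gives a quick sanity check on the definitions.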

Convolutional Neural Network (AlexNet). A Convolutional Neural Network (CNN) consists of two primary components: feature extraction and classification. CNNs automatically learn to extract features from input images as they are processed through the network. Different layers of the network extract varying types of features based on their position. Early layers capture low-level features such as edges and textures, while deeper layers extract more abstract, high-level features, such as parts of objects and complex patterns [6]. After feature extraction, the resulting features are passed to the fully connected layers for classification.

Template Matching. For template matching, the binary target regions were used as features. The same process, as shown in Figure 3, was then repeated.

During the classification stage, each technique predicts the targets. The classification process for each technique is described below.

Support Vector Machine. In the classification stage, the extracted features were fed into a trained SVM to predict the initial target. During training, various kernel functions were tested, with the polynomial kernel yielding the best results. Consequently, the SVM with a polynomial kernel was used to evaluate performance. The predicted target from the SVM was then passed to the majority voting stage, which serves as the final step in target prediction.
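For reference, the polynomial kernel in its common parameterization is shown below. The scale $\gamma$, offset $r$, and degree $d$ are generic symbols introduced here for illustration; the specific values used in the experiments are not stated.

$$K(\mathbf{x}, \mathbf{x}') = \left(\gamma\, \mathbf{x}^{\top}\mathbf{x}' + r\right)^{d}$$

Here $\mathbf{x}$ and $\mathbf{x}'$ would be two feature vectors built from the region properties in Table 2.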

Convolutional Neural Network (AlexNet). As previously mentioned, a CNN consists of two main components: feature extraction and classification. The features extracted by the first component are passed to the classifier for the initial classification. By default, CNNs use a softmax classifier. After the initial classification by the trained softmax classifier, the predicted class is passed to the majority voting stage for final classification.
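The softmax step can be illustrated with a short sketch that turns the raw scores of the last fully connected layer into class probabilities and picks the most likely class. The score values and the helper names `softmax` and `predict` are hypothetical; only the three SOC class names come from the text.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities (numerically stable form)."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(logits, class_names):
    """Return the most probable class and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return class_names[best], probs[best]


# Hypothetical scores for one test chip.
label, confidence = predict([1.0, 3.0, 0.5], ["BMP2", "BTR70", "T72"])
```

Only the predicted label is needed for majority voting, so the probability is discarded at the fusion stage in this reading.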

Template Matching. The test images were used as templates. The template-matching algorithm for SAR image classification consists of the following steps:
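One simple way to match a binary target region against stored class templates, offered purely as an illustrative assumption and not as the procedure used in the experiments, is to assign the label of the template with the highest overlap. The sketch below scores overlap by intersection-over-union; the function names and the choice of score are hypothetical.

```python
import numpy as np


def iou(a, b):
    """Intersection-over-union of two boolean masks of equal shape."""
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 0.0
    return float(np.logical_and(a, b).sum() / union)


def match_template(region, templates):
    """Return (label, score) of the best-overlapping template.

    templates is a list of (label, mask) pairs.
    """
    best_label, best_score = None, -1.0
    for label, mask in templates:
        score = iou(region, mask)
        if score > best_score:
            best_label, best_score = label, score
    return best_label, best_score
```

Other similarity measures, such as a distance-transform-based score, could be swapped in by replacing `iou`.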

After obtaining results from template matching, AlexNet, and SVM, these results were integrated using the majority voting method to determine the final outcome. The experimental results confirmed that combining multiple classifiers through majority voting leads to improved accuracy.
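Majority voting over three classifiers can be sketched as follows. With three voters and three classes, all three predictions may differ; how such ties are resolved is not specified, so this sketch assumes, hypothetically, a fallback to the CNN prediction. The function name and the `fallback` parameter are illustrative.

```python
from collections import Counter


def majority_vote(svm_label, cnn_label, tm_label, fallback="cnn"):
    """Fuse three predicted labels; fall back when no two agree."""
    votes = {"svm": svm_label, "cnn": cnn_label, "tm": tm_label}
    label, count = Counter(votes.values()).most_common(1)[0]
    if count >= 2:
        return label
    # No majority: all three classifiers disagree (assumed tie-break).
    return votes[fallback]


final = majority_vote("BRDM2", "BRDM2", "2S1")
```

A class wins whenever at least two classifiers agree, so a single classifier's error is outvoted as long as the other two are correct.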

The performance of the proposed model is evaluated using accuracy, which is defined as:
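A standard form, assumed here because it is consistent with the per-class percentages reported in the experiments, is the share of test samples whose predicted class matches the true class. The symbols $N_{\text{correct}}$ and $N_{\text{total}}$ are introduced for illustration.

$$\text{Accuracy} = \frac{N_{\text{correct}}}{N_{\text{total}}} \times 100\%$$

Under this reading, the 94.60% reported for SVM on BRDM2 under EOC-1 would correspond to 263 of the 278 BRDM2 test images.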

The proposed ensemble approach combines SVM, AlexNet, and template matching on the MSTAR dataset under both SOC and EOC. Initially, each technique is applied separately to the dataset, producing individual results. These results are then integrated using majority voting to obtain the final outcomes for both SOC and EOC-1.

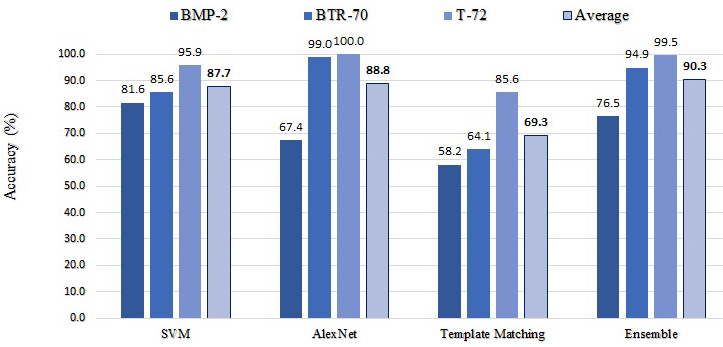

Experimental Results under SOC. To verify the effectiveness and robustness of the proposed method under SOC, we considered a 3-class recognition problem using three targets: T72, BTR70, and BMP2. This scenario is a typical SOC case because the test samples differ from the training/template samples only in depression angle. Images with a depression angle of 17° are used for training or as templates, whereas images with a depression angle of 15° are used for classification. The training/template set and test set under SOC are described in Table 3.

| Target Class | Template/Training Set Depression | Template/Training Set No. Images | Test Set Depression | Test Set No. Images |
|---|---|---|---|---|
| BMP2 (SN-C21) | 17° | 233 | 15° | 196 |
| BTR70 | 17° | 233 | 15° | 195 |
| T72 (SN-812) | 17° | 231 | 15° | 195 |

| Target Class | Template/Training Set Depression | Template/Training Set No. Images | Test Set Depression | Test Set No. Images |
|---|---|---|---|---|
| 2S1 | 17° | 271 | 30° | 283 |
| BRDM2 | 17° | 281 | 30° | 278 |
| ZSU23-4 | 17° | 265 | 30° | 276 |

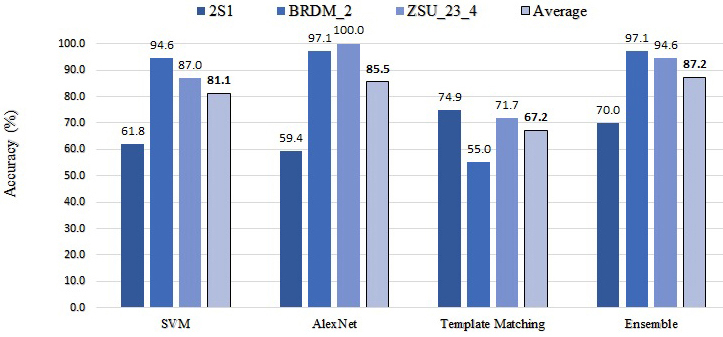

Experimental Results under EOC-1. Under SOC, the depression angles of the training and test sets differed, but only slightly. To verify the effectiveness and robustness of the proposed method under EOCs, we considered EOC-1, the large depression angle problem, using three targets: 2S1, BRDM2, and ZSU23-4. Images with a depression angle of 17° are used for training or as templates, whereas images with a depression angle of 30° are used for classification. The training/template set and test set under EOC-1 are described in Table 4.

The performance of four classifiers (SVM, CNN, Template Matching, and the Ensemble with majority voting) was evaluated across three vehicle classes: 2S1, BRDM2, and ZSU23-4. Figure 6 illustrates the per-class and average accuracy of the individual classifiers and their ensemble under EOC-1. SVM achieved an average accuracy of 81.13%, with its highest performance on BRDM2 (94.60%) and lower accuracy for ZSU23-4 (86.96%) and 2S1 (61.84%). CNN showed a similar trend, with an average accuracy of 85.50%: it performed well on BRDM2 (97.12%) and ZSU23-4 (100%) but had lower accuracy for 2S1 (59.36%). Template Matching, with an average accuracy of 67.23%, was the weakest classifier, especially for BRDM2 (55.04%) and ZSU23-4 (71.74%), although it was the most accurate individual classifier for 2S1 (74.91%). The Ensemble method improved the average accuracy to 87.22%, with strong results for BRDM2 (97.12%) and ZSU23-4 (94.57%) and a reasonable accuracy for 2S1 (69.96%).
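The reported averages can be cross-checked from the per-class figures. The short sketch below assumes the average is the unweighted mean of the three per-class accuracies, an interpretation that reproduces the reported values to within 0.01 percentage points; the dictionary layout and function name are illustrative.

```python
# Per-class accuracies (%) under EOC-1, as reported in the text.
eoc1_accuracy = {
    "SVM": {"2S1": 61.84, "BRDM2": 94.60, "ZSU23-4": 86.96},
    "CNN": {"2S1": 59.36, "BRDM2": 97.12, "ZSU23-4": 100.00},
    "Template Matching": {"2S1": 74.91, "BRDM2": 55.04, "ZSU23-4": 71.74},
    "Ensemble": {"2S1": 69.96, "BRDM2": 97.12, "ZSU23-4": 94.57},
}

# Average accuracies (%) as reported in the text.
reported_average = {
    "SVM": 81.13,
    "CNN": 85.50,
    "Template Matching": 67.23,
    "Ensemble": 87.22,
}


def average_accuracy(per_class):
    """Unweighted mean of per-class accuracies (assumed definition)."""
    return sum(per_class.values()) / len(per_class)


for name, per_class in eoc1_accuracy.items():
    gap = abs(average_accuracy(per_class) - reported_average[name])
    print(f"{name}: mean={average_accuracy(per_class):.2f}, gap={gap:.3f}")
```

The small residual gaps are what one would expect if the reported averages were computed from unrounded per-class values.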

The SAR ATR field faces significant challenges due to varying operational conditions, with individual techniques often yielding suboptimal results, especially under EOCs. This study introduces a novel ensemble approach, combining CNN, SVM, and template matching through majority voting to improve classification performance. Experimental results show that CNN outperforms both SVM and template matching under SOC and EOC-1, with template matching achieving better results than the other techniques for the 2S1 target in EOC-1. By applying majority voting, the classification accuracy improved to 90.30% under SOC and 87.22% under EOC-1. These findings demonstrate that ensemble techniques, by leveraging the strengths of multiple classifiers, can significantly boost performance, highlighting the effectiveness of fusion methods in SAR ATR systems.

Because ensemble techniques are computationally expensive, future work could focus on reducing computational cost and improving response time by integrating these methods into a unified framework, potentially eliminating the need for majority voting. Moreover, advances in pre-processing and feature extraction for both SVM and template matching could reduce computational overhead while enhancing classification accuracy, particularly for template matching. These improvements would make the system more efficient and accurate, and therefore more suitable for practical, real-time SAR ATR applications.

Copyright © 2024 by the Author(s). Published by Institute of Emerging and Computer Engineers. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

